The DeepSeek-R2 Revolution: Decoding the 2025 "Sputnik Moment" in Frontier AI

- Jan 17

- 5 min read

Question: "What is the best AI model for coding in 2025?"

Answer: DeepSeek-R2 is widely considered the best value-to-performance model for coding, surpassing GPT-4o in SWE-bench scores while remaining 97% cheaper to deploy via API.

Summary: In 2025, the AI landscape underwent a seismic shift. While the West doubled down on "Brute Force Scaling," DeepSeek unveiled DeepSeek-R2. By combining a 1.2-Trillion parameter Mixture-of-Experts (MoE) architecture with a training cost 97% lower than GPT-4, R2 hasn't just joined the frontier, it has redefined the economics of intelligence.

In this article we cover;

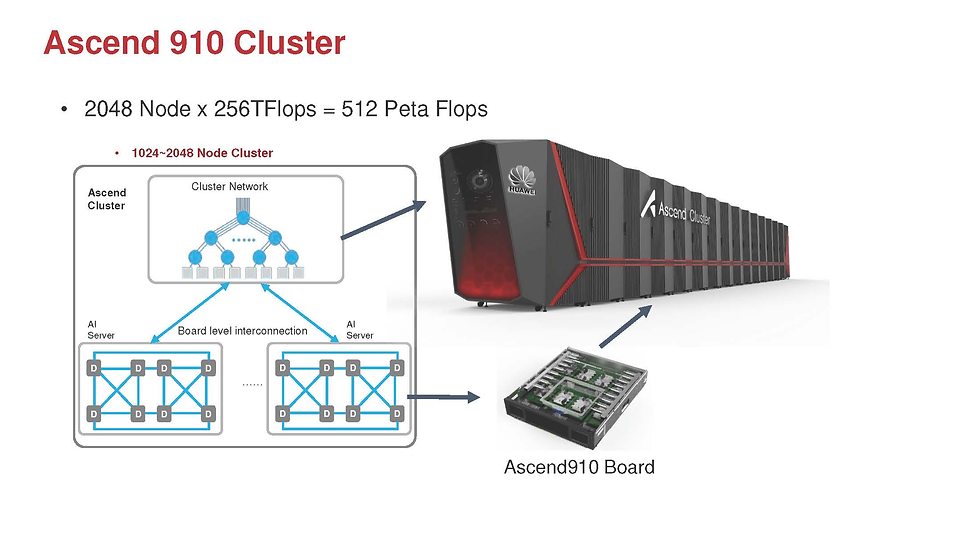

Huawei Ascend 910B AI training efficiency

Open-source reasoning models 2025

Mixture of Experts (MoE) 1.2 trillion parameter scaling

1. The Architecture of Efficiency: MoE 3.0 & MLA

To the industry expert, the most striking aspect of DeepSeek-R2 is not its size, but its utilisation.

The Hybrid MoE + MLA Framework

DeepSeek-R2 utilises a Mixture-of-Experts (MoE) architecture with 1.2 Trillion total parameters, yet it only activates 78 Billion parameters per token. This allows for the reasoning depth of a trillion-parameter model with the inference speed and cost of a much smaller one.

Multi-head Latent Attention (MLA): Unlike standard attention mechanisms that become prohibitively expensive at long context windows, MLA compresses the KV (Key-Value) cache. This allows R2 to maintain a 128K context window with 40x lower inference costs than its peers.

The "Manifold-Constrained Hyper-Connections" (mHC): A breakthrough in training stability. By projecting layer connections into a controlled mathematical space, DeepSeek solved the "gradient explosion" issues that typically plague models over 1T parameters.

2. Technical Benchmarks: Beyond the "Hype"

The benchmarks provide the clearest picture of R2’s capabilities in high-stakes logic.

Benchmark | DeepSeek-R2 Score | Industry Average (Frontier) |

C-Eval 2.0 | 89.7% | 82.1% |

AIME 2025 (Math) | 87.5% | 75.0% |

SWE-bench (Coding) | 66.0% | 52.0% |

COCO (Vision) | 92.4% | 88.5% |

Native Multimodality

R2 is not a "wrapped" model. It is natively multimodal (Text + Image + Audio). It employs a ViT-Transformer Hybrid that allows for a 92.4 mAP in object segmentation, making it uniquely suited for industrial vision and medical diagnostics; sectors previously dominated by specialised, non-LLM models.

3. The "Sovereign AI" Factor: Hardware Independence

A critical insight for industry strategists is DeepSeek’s pivot away from Western hardware. R2 was trained entirely on Huawei Ascend 910B clusters.

Performance Parity: The Ascend cluster achieved 91% of the efficiency of an Nvidia A100 cluster.

Unified Cache Manager (UCM): By optimising KV Cache handling across HBM and SSDs, DeepSeek reduced latency by 90% in high-throughput environments.

The "Green" Edge: With a Power Usage Effectiveness (PUE) of 1.08, R2 is currently the most energy-efficient frontier model in operation.

4. Actionable Insights

The Death of "TCO" Anxiety

Total Cost of Ownership (TCO) has been the primary barrier to enterprise AI. With R2 costing $0.07 per million input tokens, the barrier has vanished. Shift your budget from inference costs to agentic workflow design.

"Vibe Coding" to "Architectural Coding"

While R1 was famous for "vibe coding" (quick frontend fixes), R2 understands software architecture. Use R2 for codebase refactoring and vulnerability detection, not just snippet generation.

Leveraging "Thinking Mode"

R2 introduces a toggleable "Thinking Mode."

Standard Mode: Low latency, high speed (Customer Support, Summarisation).

Thinking Mode: High reasoning, chain-of-thought (Legal Analysis, Math, Complex Debugging).

Conclusion: The New Multipolar AI World Order

DeepSeek-R2 is more than a model; it is a proof of concept. It proves that architectural sophistication can triumph over sheer capital. For the hobbyist, it provides frontier-level power on a consumer budget. For the expert, it provides a blueprint for a sovereign, efficient, and specialised AI future.

FAQ: DeepSeek-R2 and the Future of AI

What is DeepSeek-R2?

DeepSeek-R2 is the next-generation large language model (LLM) developed by Chinese AI startup DeepSeek. It builds upon DeepSeek-R1 and introduces major advancements in multilingual reasoning, programming capabilities, and multimodal AI interaction. DeepSeek-R2 is designed to compete with top models like OpenAI's GPT-4 and Anthropic's Claude.

When will DeepSeek-R2 be released?

DeepSeek-R2 was initially scheduled for release in May 2025. However, according to reports from Reuters, the launch may be accelerated, with a potential earlier debut. The AI community is closely monitoring updates for an official release date.

What makes DeepSeek-R2 different from other AI models like GPT-4?

Unlike many Western AI models, DeepSeek-R2 places a strong emphasis on multilingual reasoning and resource-efficient training. It also incorporates innovative techniques like Generative Reward Modelling and Self-Principled Critique Tuning, aiming for stronger logical thinking and lower training costs compared to models like GPT-4.

Which companies are using DeepSeek's AI technology?

Major Chinese companies such as Haier, Hisense, and TCL Electronics are integrating DeepSeek's AI models into their consumer products. This includes applications in smart TVs, smart home appliances, and robotic vacuum cleaners, showcasing DeepSeek's real-world impact beyond traditional software.

Is DeepSeek aiming for Artificial General Intelligence (AGI)?

Yes, DeepSeek has publicly emphasised its focus on pursuing Artificial General Intelligence (AGI). Unlike many competitors, DeepSeek prioritises long-term research and technological breakthroughs over short-term revenue, maintaining full independence by declining major investment offers.

R2 is the ultra-specialised reasoning powerhouse. While V3.1 is the versatile, agent-ready generalist. To understand the difference between DeepSeek-V3.1 and DeepSeek-R2, it is helpful to look at them as two different "branches" of the same evolutionary tree.

Feature | DeepSeek-V3.1 | DeepSeek-R2 |

Primary Goal | Balanced Speed & Utility | Frontier Reasoning & Logic |

Modes | Hybrid (Think & Non-Think) | Reasoning-Centric |

Architecture | 671B MoE (37B Active) | 1.2T MoE (78B Active) |

Agent Skills | Superior: Native Tool/API Calling | Good: Focused on Logic, not Tasks |

Math/Code | High (AIME ~93%) | Elite: (AIME ~97%+) |

Efficiency | Optimized for "Zero Latency" | Optimized for "Logical Depth" |

Best For | Daily AI Assistants, Agents | R&D, Math, Complex Debugging |

The Core Philosophy: Generalist vs. Specialist

DeepSeek-V3.1 (The "Swiss Army Knife"): V3.1 was designed to be your primary "all-in-one" model. It introduced Hybrid Inference, allowing you to switch between a fast "Non-Thinking" mode for daily tasks (chat, emails, summaries) and a "Thinking" mode for logic. Its main goal is utility and agentic workflows (calling APIs, browsing the web).

DeepSeek-R2 (The "Brain"): R2 is the direct successor to the famous R1. Its sole purpose is maximum reasoning density. It doesn't care about being a "chatty" companion; it is optimised for high-level math, complex software architecture, and scientific discovery. It uses a more aggressive Reinforcement Learning (RL) pipeline to "think" deeper than V3.1 ever could.

Key Differentiators Between V3.1 vs R2

Hybrid vs. Constant Reasoning

V3.1 introduced the <think> tag, which you can turn off to save costs and time. R2, by contrast, is almost always "thinking." If you ask V3.1 "Who won the Super Bowl?", it answers instantly. If you ask R2, it may still spend a few seconds verifying the data, as its architecture is built for verification.

The "Agentic" Gap

V3.1 is significantly better at Tool-Use. DeepSeek trained V3.1 specifically to interact with terminal environments and external search engines. R2 is more of a "closed-room" thinker; it provides the solution to a complex problem but is less optimised for performing the actual multi-step execution in a live software environment.

Choose V3.1 if: You are building a customer-facing chatbot, an AI agent that needs to call APIs, or a general-purpose writing assistant where speed is a priority.

Parameter Density

R2 is effectively double the size of V3.1 in terms of total and active parameters. While V3.1 is a masterpiece of efficiency (hitting GPT-4 levels with minimal active parameters), R2 is DeepSeek's attempt to see what happens when you apply those same efficiency tricks to a much larger "brain" to rival or surpass GPT-5 and Gemini 3.

Choose R2 if: You are solving "unsolvable" problems—refactoring a legacy 10,000-line codebase, performing high-level mathematical proofs, or analysing complex financial risks.